Desktop GPU roadmap: Nvidia Rubin, AMD UDNA & Intel Xe3 Celestial

TL;DR

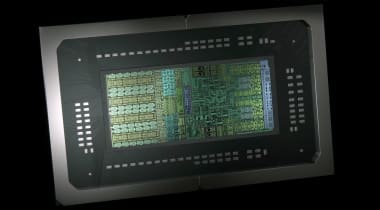

AI Generated

The article surveys upcoming desktop GPU architectures from Nvidia, AMD, and Intel. Nvidia's Rubin architecture is expected to debut in late 2026, with significant improvements in transistor density and power efficiency. AMD is transitioning to UDNA, a unified design targeting both gaming and compute workloads, also expected to launch in late 2026. Intel's Xe3 Celestial architecture is progressing toward volume production and is likely to debut in early 2027. All three companies are focusing on AI acceleration, memory technologies, and architectural enhancements to advance desktop GPU capabilities over the coming years.